If you develop Android applications using AI, Google's latest benchmark simplifies the process of selecting the appropriate model.

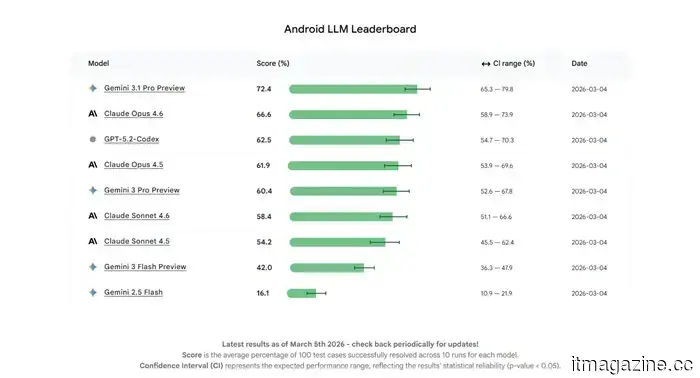

Android Bench assesses the performance of various AI models in real-world Android coding tasks.

For Android app developers who use AI for coding, choosing the right model can be a challenge. Not all models are created equal, and many are not specifically tuned for Android development workflows. To tackle this, Google has launched a new benchmark that shows developers how well different AI models perform in practical Android coding scenarios.

Called Android Bench, the benchmark evaluates how well large language models (LLMs) handle common Android development tasks. Google explains that it draws on real-world tasks from public projects on GitHub, requiring models to reproduce actual pull requests and resolve issues like those developers face when building Android apps. Each result is then verified to confirm that it genuinely resolves the corresponding issue.

With so many options available, picking the best AI model for a task can be daunting, which is why the industry turns to LLM benchmarks for guidance. The problem for Android developers is that general-purpose benchmarks do not adequately cover the tasks they actually encounter. Android Bench, by contrast, checks whether the code a model produces truly fixes the problem rather than merely appearing correct, which lets Google evaluate how useful different models are for genuine Android development work.

With the inaugural version of Android Bench, Google aimed “to solely assess model performance without concentrating on agency or tool use.” The results span a wide range: models successfully completed between 16% and 72% of the benchmark tasks. The company believes that publishing these results will make models easier to compare and help developers identify those capable of handling real Android coding challenges.
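To make the percentages concrete, here is a minimal sketch of how a completion rate could be computed, assuming each benchmark task yields a simple resolved-or-not outcome per model. This is not Android Bench's actual scoring code; `TaskResult`, `completion_rates`, and the sample data are hypothetical names and values invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    """Hypothetical record: one model's outcome on one benchmark task."""
    model: str
    task_id: str
    resolved: bool  # True if the model's change was verified to fix the issue


def completion_rates(results):
    """Return the percentage of tasks each model resolved."""
    totals = {}
    solved = {}
    for r in results:
        totals[r.model] = totals.get(r.model, 0) + 1
        solved[r.model] = solved.get(r.model, 0) + (1 if r.resolved else 0)
    return {model: 100.0 * solved[model] / totals[model] for model in totals}


# Made-up sample data, not real benchmark results.
sample = [
    TaskResult("model-a", "task-1", True),
    TaskResult("model-a", "task-2", False),
    TaskResult("model-a", "task-3", False),
    TaskResult("model-a", "task-4", False),
    TaskResult("model-b", "task-1", True),
    TaskResult("model-b", "task-2", True),
    TaskResult("model-b", "task-3", True),
    TaskResult("model-b", "task-4", False),
]

rates = completion_rates(sample)
for model, rate in sorted(rates.items()):
    print(f"{model}: {rate:.0f}% of tasks resolved")
```

In this sketch a task counts only if its fix was verified, which mirrors the article's point that the benchmark scores code that actually solves the problem, not code that merely looks right.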

Beyond aiding developers, the benchmark may incentivize AI companies to enhance their models' comprehension of Android development. To support this initiative, Google has made Android Bench’s methodology, dataset, and testing framework publicly available on GitHub. Over time, this could result in AI tools that are better suited for navigating intricate Android codebases, thereby helping developers build and fix apps more efficiently.

Pranob is an experienced tech journalist with over eight years in the consumer technology sector. His work has…

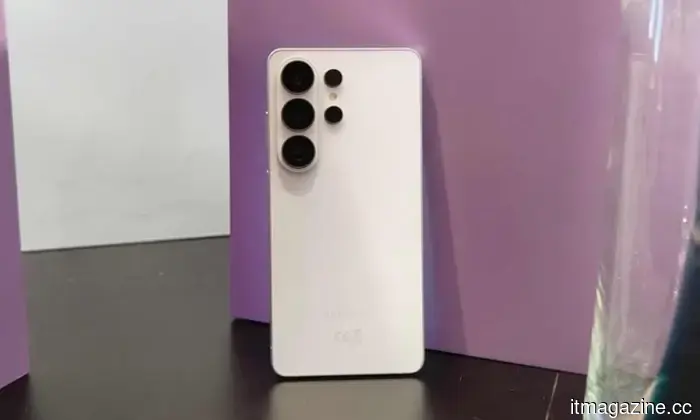

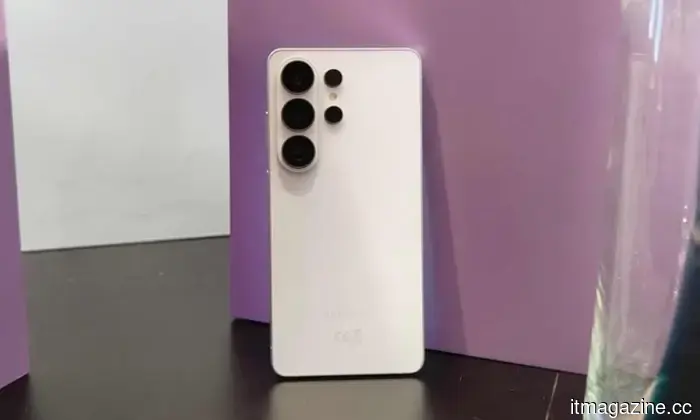

Samsung's latest flagship, the Galaxy S26 Ultra, introduces improvements to battery and charging capabilities. There is a slight increase in battery size and charging speeds, with wireless charging upgraded to 25W. This enhancement marks a significant rise from the previous standard of 15W across Samsung’s premium range. However, achieving these speeds might be more challenging than anticipated.

A recently identified issue in Android 16 is alarmingly affecting security experts and VPN providers, indicating that a system-level bug may be silently interfering with VPN connections on impacted devices. This problem has reportedly persisted for several months, potentially leaving users unknowingly vulnerable while they assume their internet traffic remains secure.

Samsung has launched a new initiative to attract more customers to the Galaxy S26 series in one of its primary markets. Through a recent press release, the company introduced the “Galaxy Forever” program in India. Despite the potentially confusing name, it essentially serves as an ownership or periodic upgrade scheme, allowing buyers to acquire the Galaxy S26 Ultra (priced from $1502) or Galaxy S26 Plus (starting at $1,288) by paying 50% of the device's price upfront and spreading the rest over 12 interest-free monthly installments. The regular Galaxy S26 is not included in this offer.

Other articles

iRU hides Tactio 515 behind the screen

The Russian company iRU has solved the problem of a constantly cluttered desk by releasing a device that is practically invisible on the office table. More precisely, it attaches to the back of the monitor. The Tactio 515 is a nettop for those who value every centimeter of their workspace.

iRU hides Tactio 515 behind the screen

The Russian company iRU has solved the problem of a constantly cluttered desk by releasing a device that is practically invisible on the office table. More precisely, it attaches to the back of the monitor. The Tactio 515 is a nettop for those who value every centimeter of their workspace.

T-Mobile's latest promotion for 5G Home Internet offers up to $300 in rebates.

If you've been thinking about changing from conventional cable, T-Mobile 5G Home Internet's latest promotion could be the strongest incentive to do so. The provider is giving new customers up to $300 back, based on the plan selected. With an easy setup, unlimited data, and no contracts, this limited-time deal is among the most straightforward and [...]

T-Mobile's latest promotion for 5G Home Internet offers up to $300 in rebates.

If you've been thinking about changing from conventional cable, T-Mobile 5G Home Internet's latest promotion could be the strongest incentive to do so. The provider is giving new customers up to $300 back, based on the plan selected. With an easy setup, unlimited data, and no contracts, this limited-time deal is among the most straightforward and [...]

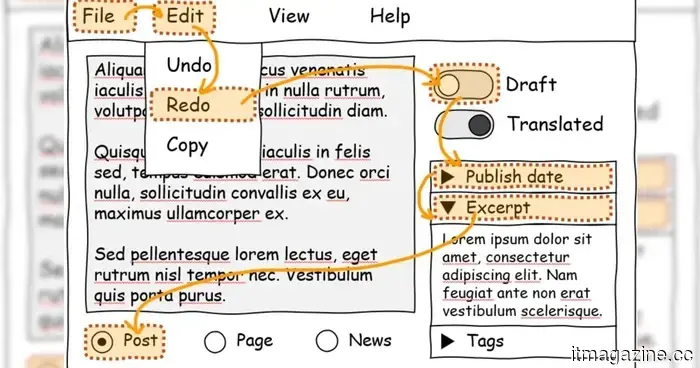

Microsoft's latest browser feature will enhance the keyboard accessibility of websites.

Many websites struggle with basic keyboard navigation. Microsoft's new browser tool aims to address this issue, and it requires just a single HTML attribute to implement.

Microsoft's latest browser feature will enhance the keyboard accessibility of websites.

Many websites struggle with basic keyboard navigation. Microsoft's new browser tool aims to address this issue, and it requires just a single HTML attribute to implement.

If you develop Android apps using AI, Google's new benchmark simplifies the process of selecting the appropriate model.

For Android app developers who depend on AI for coding, selecting the appropriate model can be challenging. Not every model is created equal, and many lack specific training for Android development processes. To help with this issue, Google has launched a new benchmark aimed at assisting developers in assessing the performance of various AI models in real-world Android scenarios.

If you develop Android apps using AI, Google's new benchmark simplifies the process of selecting the appropriate model.

For Android app developers who depend on AI for coding, selecting the appropriate model can be challenging. Not every model is created equal, and many lack specific training for Android development processes. To help with this issue, Google has launched a new benchmark aimed at assisting developers in assessing the performance of various AI models in real-world Android scenarios.

MAIBENBEN has gathered a "cube" for non-trivial tasks.

The company decided that creative people and those who work with big data need a special gift and introduced the MAIBENBEN PC95A workstation. Inside this monolithic cube resides a powerful AMD Ryzen AI MAX+ 395 processor with AMD Radeon 8060S graphics. Judging by the specifications, the device is designed for those who locally "communicate" with large language models, process terabytes of data, or engage in complex 3D rendering. The new product comes with a two-year warranty - apparently, there is confidence in the product.

MAIBENBEN has gathered a "cube" for non-trivial tasks.

The company decided that creative people and those who work with big data need a special gift and introduced the MAIBENBEN PC95A workstation. Inside this monolithic cube resides a powerful AMD Ryzen AI MAX+ 395 processor with AMD Radeon 8060S graphics. Judging by the specifications, the device is designed for those who locally "communicate" with large language models, process terabytes of data, or engage in complex 3D rendering. The new product comes with a two-year warranty - apparently, there is confidence in the product.

If you develop Android applications using AI, Google's latest benchmark simplifies the process of selecting the appropriate model.

For Android app developers using AI for coding, selecting the appropriate model can be challenging. Not every model is created equal, and many lack specific training for Android development processes. To resolve this issue, Google has launched a new benchmark aimed at helping developers assess the performance of various AI models in real-world Android scenarios.