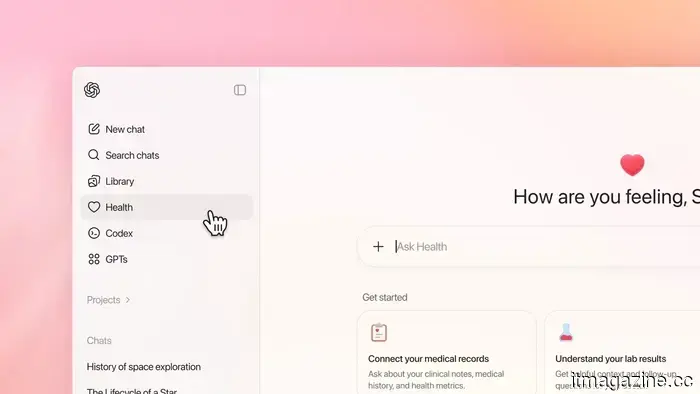

ChatGPT Health is here.

I’ve said it many times: the products on the market are rarely revolutionary innovations. More often, they reflect people’s habits, shortcuts, fears, and everyday behaviors. Design is shaped by behavior. It always has been.

Consider this: you probably know someone who uses ChatGPT for health questions. Not occasionally, but regularly: as a second opinion, or as a way to gauge a concern before voicing it to anyone. At times, it even acts as a therapist, a confidant, or a non-judgmental space.

Once such habits take hold, companies shift from observing to building. At that point, users become more than customers; they become co-creators, with their behavior quietly shaping how the product develops.

This context matters given OpenAI’s announcement on January 7, 2026, of ChatGPT Health, a dedicated AI-powered service focused on healthcare. OpenAI describes it as “a dedicated experience that securely integrates your health information with ChatGPT’s intelligence.”

Access is limited for now. The company is rolling the feature out gradually through a waitlist over the coming weeks. Anyone with a ChatGPT account, free or paid, can sign up for early access, except users in the EU, the UK, and Switzerland, where regulatory questions remain unresolved.

The stated goal is simple: to help people feel more informed, prepared, and confident in managing their health. The number behind this decision, however, says more than the usual press language. OpenAI reports that more than 230 million people worldwide ask ChatGPT health or wellness questions every week.

This raises uncomfortable questions.

Why do so many people seek health information from an AI? Is it speed? The promise of an instant answer? A shift in our expectations toward immediate clarity, even on complex or sensitive matters? Is it discomfort or hesitancy about raising certain topics openly with a doctor? Or is it something deeper: a gradual loss of trust in human systems, paired with a growing reliance on machines that respond without judgment or interruption?

ChatGPT Health does not answer these questions. It simply formalizes behavior that already exists.

According to OpenAI, the new Health feature enables users to securely link their personal health information, including medical records, lab results, and data from fitness or wellness applications. For example, someone might upload recent blood test results to ask for a summary, or connect a step counter to compare their activity with prior months.

The system can pull in data from platforms such as Apple Health, MyFitnessPal, Peloton, AllTrails, and even grocery data from Instacart. The promise is contextual responses rather than generic advice: clear explanations of lab results, trends highlighted over time, suggested questions to raise at medical appointments, and diet or exercise recommendations grounded in the user’s own data.

What ChatGPT Health intentionally avoids doing is just as important as what it is designed to do.

OpenAI is explicit: this tool is not a source of medical advice. It does not diagnose illnesses or prescribe treatments; it is meant to support care, not replace it. The framing is deliberate. ChatGPT Health presents itself as an assistant rather than an authority, helping users understand patterns and prepare for conversations with their clinicians rather than making decisions on their behalf.

This distinction is vital. It's one I hope users take seriously.

Behind the scenes, OpenAI says the system was developed under extensive medical oversight. Over the past two years, more than 260 physicians from roughly 60 countries reviewed responses and provided feedback, contributing more than 600,000 individual assessments. The focus was not only on accuracy but also on tone, clarity, and knowing when to urge users to seek professional care.

To support this process, OpenAI created an evaluation framework called HealthBench, which scores responses against clinician-defined standards, including safety, medical accuracy, and the appropriateness of the guidance given. It is an attempt to bring structure to a field where nuance matters and errors can have serious consequences.

Privacy is another critical aspect that OpenAI is keen to highlight. ChatGPT Health operates in a distinct, isolated environment within the app. Health-related discussions, linked data sources, and uploaded files remain completely separate from standard chats. Health data is not included in the general ChatGPT memory, and exchanges within this environment are encrypted.

OpenAI also states that health conversations are not used to train its core models. Whether that assurance will satisfy every user is uncertain, but the structural separation shows an awareness of how sensitive this area is.

In the United States, the system goes a step further. Through a partnership with b.well Connected Health, ChatGPT Health can access real electronic health records from thousands of providers, with the user’s consent. This lets it summarize official lab reports or condense lengthy medical histories into readable overviews. Outside the US, functionality is more limited, mainly because of differences in regulation.
