The shocking lawsuit against OpenAI alleges that your conversations with ChatGPT were shared with Google and Meta.

A class action lawsuit claims that OpenAI allowed Google and Meta trackers to gather sensitive user information.

A recent privacy lawsuit involving ChatGPT alleges that OpenAI shared user prompts and identifiable information with tracking tools from Google and Meta without obtaining proper consent.

The class action, filed in California and reported by Futurism, asserts that data associated with ChatGPT users, including chat queries, emails, and user IDs, passed through tools like Meta Pixel and Google Analytics. The lawsuit claims that this data sharing violated California privacy laws and federal wiretap regulations.

The implications are notably personal. Individuals use ChatGPT for various reasons, including work, health inquiries, financial issues, legal assistance, and emotional support. The lawsuit places these discussions at the forefront of a debate regarding the limits of web-tracking systems.

Data Movement Explained

The complaint focuses on tracking systems that aid companies in measuring user activity and facilitating targeted advertising. It specifically mentions Meta Pixel and Google Analytics, arguing that tools designed for the broader internet pose a heightened privacy risk when involved in chatbot interactions.

The core issue lies in the combination of user prompts with identifying information like emails and user IDs. A single prompt can disclose sensitive information. When linked to a specific individual, that prompt could contribute to a profile that follows the user beyond a single chat session.

Increased Impact

ChatGPT can capture unfinished thoughts and private information that individuals usually do not enter into a typical search box. Users seek assistance with messages, health symptoms, workplace difficulties, financial choices, and personal anxieties. This context strengthens the privacy claims.

OpenAI’s privacy policy indicates that it collects, stores, and shares certain user data. However, the lawsuit contends that the company has overstepped legal boundaries by allowing such tracking without necessary consent. There can be a significant gap between privacy policy language and genuine informed consent.

What Users Should Do

The allegations remain unproven, and the lawsuit is still progressing through the legal system. OpenAI has not yet responded to the request for comment noted in the source report. Nonetheless, the lawsuit reinforces a familiar caution: while AI chats may seem confidential, the underlying technology operates on standard internet systems.

For now, exercising caution is advisable. Avoid inputting names, account numbers, medical details, legal information, or financial specifics into ChatGPT unless you are comfortable with the associated privacy risks. Always assume that your prompts could contribute to a broader data trail.

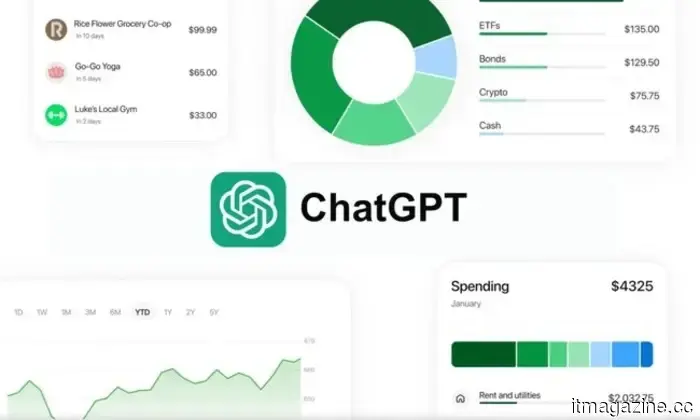

ChatGPT can now give finance tips if you link your bank account.

ChatGPT has introduced a personal finance feature that allows it to access your bank account for spending analysis and financial planning. OpenAI is currently piloting this feature in the U.S. for Pro subscribers, who pay $200 per month, with plans to extend it to Plus users after collecting initial feedback.

This feature enables you to connect your financial accounts via Plaid, which links bank applications with third-party services and works with over 12,000 institutions, including Chase, Fidelity, Schwab, and American Express.

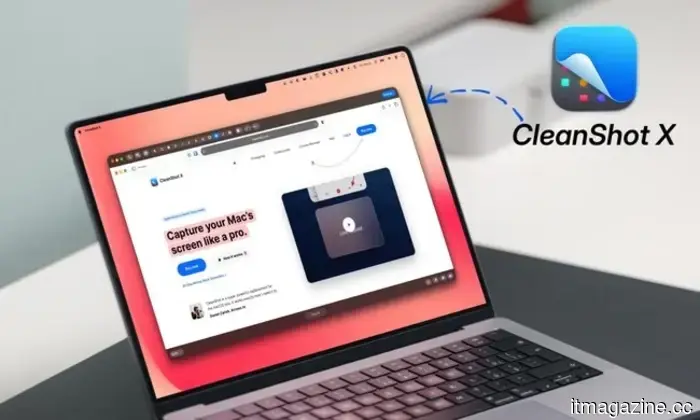

Your Mac's built-in screenshot tool may be limiting you. It's time for an upgrade.

macOS comes with a basic screenshot tool that covers everyday needs, allowing you to capture screenshots, record your screen, and annotate images. However, when you want additional features, like scrolling capture, advanced annotation options, or a quick way to share your screenshots, its limitations become apparent.

Enter CleanShot X. This robust screenshot and screen recording application for Mac replaces the default tool and includes all the features that users find lacking in the built-in version.

Research indicates that AI agents designed for routine computer tasks may be problematic.

Recent research from UC Riverside reveals that AI agents meant to perform everyday computer functions struggle significantly with context.

The study evaluated 10 agents and models from major developers, including OpenAI, Anthropic, Meta, Alibaba, and DeepSeek, and found that these agents made undesirable or potentially harmful decisions 80% of the time, leading to digital damage 41% of the time.

Other articles

In 2026, the HomePod mini remains a sensible choice if you are already integrated into Apple's ecosystem.

The HomePod mini has seen little change over the years, but it continues to deliver respectable sound quality, effortless integration with Apple devices, and an unexpectedly good experience with the Apple TV 4K. The downside is that many of its top features work best within Apple’s ecosystem.

Eighteen48 Partners completes EUR175M initial tranche for its European mid-market buyout fund.

Eighteen48 Partners, based in London, has raised EUR175M for its inaugural private equity fund, which targets a total of EUR350M. The firm focuses on supporting mid-market buyouts that are sourced through independent sponsors throughout Europe.

Anthropic has partnered with the Gates Foundation, investing $200 million to implement AI in global health, education, and agriculture.

The four-year collaboration will support vaccine research for overlooked diseases, AI tutoring initiatives in sub-Saharan Africa, and public benchmarks. It far exceeds OpenAI's $50 million agreement with the Gates Foundation.

Impressed by AI agents that use computers? Studies indicate they can be “digital disasters,” even for simple tasks.

Recent research from UC Riverside found that AI agents that operate computers frequently pursue unsafe or illogical tasks, raising concerns about whether current desktop agents are ready for sensitive daily operations.

China is at the forefront of the agentic commerce competition as Alibaba, Meituan, and JD.com implement AI shopping agents on a large scale.

Alibaba has integrated Qwen AI with Taobao's catalog of 4 billion items. Alipay handled 120 million transactions via AI agents in a single week. China is redefining the landscape of e-commerce.
