AI has amplified the most harmful types of abusive content online, and guardians are struggling to keep pace.

AI is contributing to the surge of child abuse content online

Artificial intelligence has undeniably provided many beneficial tools for the internet. However, it has also given a horrifying boost to one of the most terrible forms of abuse. Recent reports and findings from watchdog organizations highlight a disturbing trend where generative AI is enabling offenders to produce child sexual abuse imagery on a much larger scale.

These images are becoming increasingly lifelike and are being presented in formats that pose significant challenges for platforms, regulators, and child-safety organizations.

The escalating scale and extremity of AI-generated content

In February, Reuters reported that actionable alerts regarding AI-generated child sexual abuse imagery had more than doubled in the past two years. The Internet Watch Foundation later disclosed that it had identified 8,029 AI-generated images and videos of child sexual abuse in 2025 alone. A Bloomberg report examined the problem further, detailing how generative AI is transforming the child sexual abuse material landscape in the United States.

Investigators now face challenges beyond just AI-generated pornographic images and videos; they are also encountering manipulated images of actual children and chatbot interactions where alleged offenders seek grooming advice or role-play scenarios involving sexual abuse. Meanwhile, law enforcement is consumed with determining whether a child depicted in an image is real, digitally modified, or entirely fictitious.

Actual cases are becoming increasingly alarming

One notable case in Minnesota involves William Michael Haslach, a school lunch monitor and traffic guard accused of utilizing AI tools to digitally undress children in photographs he had taken while at work. Federal agents uncovered over 90 victims and discovered nearly 800 AI-generated abuse images on his devices, illustrating how offenders are increasingly leveraging ordinary photos from social media to create explicit content.

Investigators are overwhelmed by the sheer volume and misleading leads

The situation is deteriorating rapidly. Bloomberg reports that the National Center for Missing & Exploited Children (NCMEC) received 1.5 million reports of AI-linked CSAM in 2025, a sharp increase from 67,000 the previous year and just 4,700 in 2023. At the same time, investigators say automated moderation systems are generating a surge of irrelevant leads, inundating task forces that are already stretched thin. Each erroneous tip consumes time that could have gone toward addressing immediate threats to children.

Vikhyaat Vivek is a tech journalist and reviewer with seven years of experience in consumer hardware coverage, focusing on...

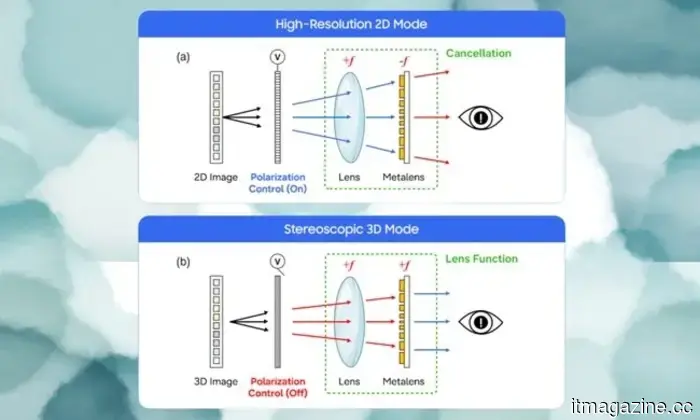

Samsung's new display technology allows switching between 2D/3D on OLED panels, without the need for glasses

Samsung's 2D/3D metalens technology enables 3D viewing without glasses, making it practical, wide-angle, and compatible with the OLED screens currently found in mobile phones.

Samsung Research, in collaboration with POSTECH in South Korea, has published a study in the journal Nature outlining a breakthrough in display technology. The company is developing a switchable 2D/3D system that operates without glasses or eye-tracking systems.

This system does not significantly increase the size of the display; it is designed around a Metasurface Lenticular Lens, a very thin optical layer just 1.2mm thick, composed of nanoscale structures that manipulate light behavior—and thus affect our perception of the light emitted from the display.

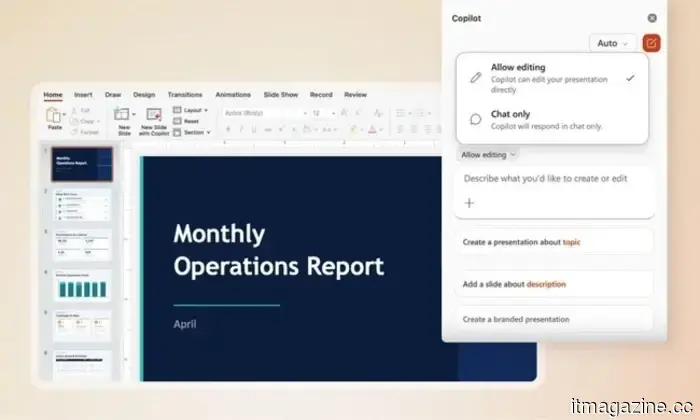

Microsoft Copilot can now perform actual tasks within your Word, Excel, and PowerPoint files

Copilot can now make edits to your spreadsheets and presentations.

Microsoft is introducing a new feature for Office users this week. The company has launched Agent Mode in Word, Excel, and PowerPoint, a more robust version of the Copilot experience that Microsoft refers to as "vibe working." This has become the default experience for Microsoft 365 Copilot and Microsoft 365 Premium subscribers, and it is also accessible to Microsoft 365 Personal and Family plan users.

Google Meet will soon take notes for you, even during in-person meetings

The "Take Notes for Me" feature for in-person meetings indicates that Google aspires for Gemini to extend beyond a single app and serve as an AI layer across all conversations you participate in, no matter where they occur.

If you've ever left a meeting unable to recall the key points discussed and ended up with a page filled with disorganized notes, Google has created a solution for you. At Google Cloud Next 2026 in Las Vegas, the company announced a significant enhancement to the "Take Notes for Me" feature in Google Meet. This functionality now works whether you're on a video call or attending a physical conference with others.

Other articles

The Pentagon has chosen three microreactor companies for use at Air Force bases as the military's nuclear program progresses towards 2030.

The US military has reduced its ANPI microreactor program from eight companies down to three, aiming to have nuclear-powered Air Force bases at Buckley SFB and Malmstrom AFB by 2030.

The Pentagon has chosen three microreactor companies for use at Air Force bases as the military's nuclear program progresses towards 2030.

The US military has reduced its ANPI microreactor program from eight companies down to three, aiming to have nuclear-powered Air Force bases at Buckley SFB and Malmstrom AFB by 2030.

How AI Is Transforming Workers' Compensation Claims and Healthcare Processes

Workers' compensation is advancing as artificial intelligence enhances claims processing, decision-making, and access to medical services. Here’s how businesses such as Claim Clarity are tackling this issue.

How AI Is Transforming Workers' Compensation Claims and Healthcare Processes

Workers' compensation is advancing as artificial intelligence enhances claims processing, decision-making, and access to medical services. Here’s how businesses such as Claim Clarity are tackling this issue.

SoftBank is looking for a $10 billion margin loan collateralized by OpenAI shares at a rate of SOFR+425 basis points as its leverage structure becomes more complex.

SoftBank is securing a $10 billion loan using its stake in OpenAI as collateral, with a spread nearly three times higher than that of its 2018 margin loan with Alibaba. S&P has downgraded its credit outlook to negative.

Zapata Quantum has secured $15 million following its exit from bankruptcy.

Zapata Quantum has secured $15 million after narrowly avoiding liquidation in 2024 and undergoing a two-phase restructuring that tackled $18.7 million in debt.

SoftBank is looking for a $10 billion margin loan collateralized by OpenAI shares at a rate of SOFR+425 basis points as its leverage structure becomes more complex.

SoftBank is securing a $10 billion loan using its stake in OpenAI as collateral, with a spread nearly three times higher than that of its 2018 margin loan with Alibaba. S&P has downgraded its credit outlook to negative.

Zapata Quantum has secured $15 million following its exit from bankruptcy.

Zapata Quantum has secured $15 million after narrowly avoiding liquidation in 2024 and undergoing a two-phase restructuring that tackled $18.7 million in debt.

Musk’s SpaceX is considering GPU manufacturing due to difficulties with Nvidia’s supply.

SpaceX aims to develop its own GPUs, but the process of chip manufacturing is quite challenging. Here's what we currently know.

Musk’s SpaceX is considering GPU manufacturing due to difficulties with Nvidia’s supply.

SpaceX aims to develop its own GPUs, but the process of chip manufacturing is quite challenging. Here's what we currently know.
