DeepL introduces real-time voice-to-voice translation in more than 40 languages.

The Cologne-based translation company, known for its text tools, has launched a voice product suite for meetings, conversations, and group interactions, along with an API for enterprise integration. A live demonstration in Seoul showed a lag of one to two sentences, and DeepL’s Chief Product Officer acknowledged that differences in word order between languages remain a significant challenge.

DeepL, the Cologne-based AI language firm recognized for its high-quality text translation, has launched DeepL Voice-to-Voice: a real-time spoken translation suite tailored for live business communications.

The product covers four use cases: virtual meetings, mobile and web conversations, group settings for frontline workers, and enterprise applications via an API. It supports more than 40 languages, including all 24 official languages of the EU, along with Vietnamese, Thai, Arabic, Norwegian, Hebrew, Bengali, and Tagalog.

The suite’s four components are at different stages of availability. Voice for Conversations, which provides real-time translation on mobile and web without requiring an app installation, is now generally available.

Voice for Meetings, which integrates with Microsoft Teams and Zoom so that each participant can speak in their native language and hear the others translated into it in real time, will open an early access program in June. The Voice-to-Voice API, which lets businesses build DeepL’s translation engine into customer-facing applications such as call centers, is currently in early access. Spoken Terms, a customization feature that teaches the system industry-specific vocabulary, company names, and personal names, is set to become generally available on May 7.

Jarek Kutylowski, DeepL’s founder and CEO, described the launch as a step in “exploring new frontiers in translation.”

“DeepL Voice-to-Voice enables natural dialogue in one’s own language without the barriers or costs associated with interpreters,” he stated.

DeepL is positioning the product as an enterprise solution rather than a consumer offering: the company has emphasized that its voice technology does not use customer data to train its models and does not retain transcription or translation data after a call ends. This privacy stance is meant to set it apart from consumer AI voice products and to appeal to regulated sectors.

The current system works as a three-step cascade: speech is transcribed to text, the text is translated by DeepL’s text translation engine, and the translated text is then converted back into speech.
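The three-step design can be sketched as a cascade of pluggable stages. This is a minimal illustration under stated assumptions, not DeepL’s implementation or API: the class `CascadedSpeechTranslator` and the stub functions `fake_transcribe`, `fake_translate`, and `fake_synthesize` are hypothetical, and the “audio” is just UTF-8 bytes so the example runs without any speech models.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage signatures for illustration; these are not DeepL APIs.
Transcriber = Callable[[bytes], str]        # speech audio -> source-language text
Translator = Callable[[str, str], str]      # (text, target_lang) -> translated text
Synthesizer = Callable[[str, str], bytes]   # (text, target_lang) -> speech audio


@dataclass
class CascadedSpeechTranslator:
    """Chains speech-to-text, text translation, and text-to-speech."""
    transcribe: Transcriber
    translate: Translator
    synthesize: Synthesizer

    def run(self, audio: bytes, target_lang: str) -> bytes:
        # Step 1: speech-to-text
        source_text = self.transcribe(audio)
        # Step 2: text-to-text translation
        target_text = self.translate(source_text, target_lang)
        # Step 3: text-to-speech in the target language
        return self.synthesize(target_text, target_lang)


# --- Stub stages so the sketch runs without real models (hypothetical) ---
def fake_transcribe(audio: bytes) -> str:
    # Pretend the "audio" already contains the spoken words as UTF-8 text.
    return audio.decode("utf-8")


def fake_translate(text: str, target_lang: str) -> str:
    # Tiny lookup table standing in for a translation engine;
    # unknown input is passed through unchanged.
    table = {("Guten Morgen", "EN"): "Good morning"}
    return table.get((text, target_lang), text)


def fake_synthesize(text: str, target_lang: str) -> bytes:
    # Stand-in for a synthetic voice: tag the text with the target language.
    return f"<{target_lang} voice> {text}".encode("utf-8")


pipeline = CascadedSpeechTranslator(fake_transcribe, fake_translate, fake_synthesize)
print(pipeline.run("Guten Morgen".encode("utf-8"), "EN"))
# b'<EN voice> Good morning'
```

The structure makes one property of a cascade visible: the stages run one after another, so each adds its own processing time, and any transcription error is passed on to the translation stage.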

DeepL’s competitive edge therefore hinges on the quality of the translation stage: the company claims its text translation models outperform alternatives and that this advantage carries over to the voice output.

In blind evaluations commissioned by DeepL and conducted independently by Slator, a language industry research firm, 96% of professional linguists preferred DeepL Voice over the native translation tools in Google Meet, Microsoft Teams, and Zoom; the evaluations highlighted its fluency and contextual accuracy. DeepL Voice scored 96.4 out of 100 in the Zoom comparison and 96.3 in the Microsoft Teams comparison.

However, a live demonstration by Chief Product Officer Gonzalo Gaiolas at the DeepL Connect Seoul event on April 15 revealed a current limitation: a noticeable delay, with the translation trailing the speaker by one to two sentences.

Gaiolas acknowledged the delay directly: “Different languages have different word orders and sentence structures, which results in delays in real-time interpretation,” he said, according to the Seoul Economic Daily.
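A toy calculation shows why word order matters for the kind of delay Gaiolas describes. It assumes, for illustration only and not as DeepL’s method, that a system speaks target words strictly in order and cannot speak a word until it has heard every source word that word depends on. The Korean sentence, its hand-made word alignment, and the helper `emission_delays` are all hypothetical examples.

```python
def emission_delays(alignment: list[list[int]]) -> list[int]:
    """Return, for each target word in speaking order, how many source words
    must already have been heard before that target word can be spoken.

    alignment[i] lists the 0-based source-word positions that target word i
    depends on. Target words are spoken strictly in order, so a word can never
    be emitted earlier than the word before it.
    """
    delays: list[int] = []
    heard_needed = 0
    for src_positions in alignment:
        # A target word needs every source word it is aligned to...
        heard_needed = max(heard_needed, max(src_positions) + 1)
        # ...and cannot overtake the previous target word.
        delays.append(heard_needed)
    return delays


# Korean source (SOV):   저는 / 내일     / 보고서를   / 보내겠습니다
#   word-by-word gloss:  I    / tomorrow / the report / will send
# English target (SVO):  I / will send / the report / tomorrow
korean_to_english = [[0], [3], [2], [1]]

# Same-order toy pair for comparison: target word i maps to source word i.
monotonic = [[0], [1], [2], [3]]

print(emission_delays(korean_to_english))  # [1, 4, 4, 4]
print(emission_delays(monotonic))          # [1, 2, 3, 4]
```

In the same-order pair, each target word can be spoken as soon as its matching source word is heard. In the Korean-to-English case, the verb comes last in Korean but second in English, so nothing after “I” can be spoken until the whole sentence has been heard.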

The company aims to reduce latency through ongoing model improvements. On voice quality, the current system uses a fixed synthetic voice; DeepL has said it plans to introduce a voice-preservation feature, which retains the speaker’s original voice characteristics in the translated output, by late 2026.

DeepL is entering a market with several well-funded competitors. Sanas, which uses AI to modify speakers’ accents in real time for call centers, recently raised $65 million in a round led by Quadrille Capital.

Camb.AI, based in Dubai, focuses on speech synthesis and translation for media dubbing, while Palabra, supported by Reddit co-founder Alexis Ohanian's Seven Seven Six, is developing a real-time speech translation engine aimed at preserving the speaker's voice characteristics.

Google, Microsoft, and Zoom each offer their own meeting translation features, which DeepL is simultaneously competing with and integrating into. DeepL is betting that translation quality, its most established differentiator, will counterbalance its competitors’ structural advantages in platform distribution.

Other articles

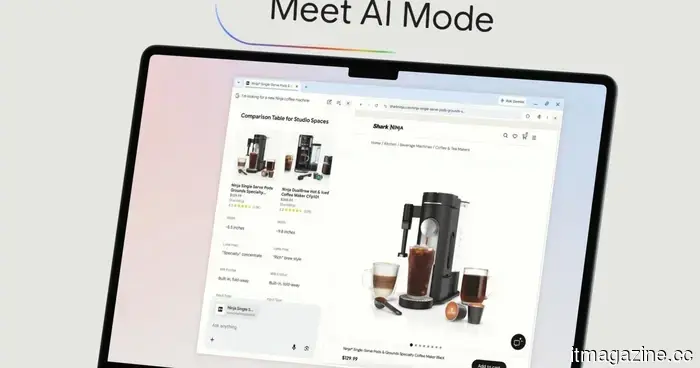

The AI feature in Chrome receives a significant enhancement to reduce the need for switching between tabs.

The AI Mode upgrade for Google Chrome allows you to browse websites and conduct searches simultaneously, enabling you to ask follow-up questions without having to lose your spot or open an additional tab.

The AI feature in Chrome receives a significant enhancement to reduce the need for switching between tabs.

The AI Mode upgrade for Google Chrome allows you to browse websites and conduct searches simultaneously, enabling you to ask follow-up questions without having to lose your spot or open an additional tab.

AlixLabs secures €15 million in Series A funding.

AlixLabs has successfully completed a €15M Series A funding round, supported by Navigare, Industrifonden, Global Brain, and Stephen Industries, to bring its APS™ to market.

AlixLabs secures €15 million in Series A funding.

AlixLabs has successfully completed a €15M Series A funding round, supported by Navigare, Industrifonden, Global Brain, and Stephen Industries, to bring its APS™ to market.

Ericsson barely falls short of Q1 profit expectations as North America sees a decline.

Ericsson's adjusted EBITA for Q1 2026 decreased by 20% to SEK 5.6 billion due to a downturn in North America and an increase in semiconductor expenses. CEO Ekholm attributes this to the demand for AI impacting chip supply.

Ericsson barely falls short of Q1 profit expectations as North America sees a decline.

Ericsson's adjusted EBITA for Q1 2026 decreased by 20% to SEK 5.6 billion due to a downturn in North America and an increase in semiconductor expenses. CEO Ekholm attributes this to the demand for AI impacting chip supply.

A $400 discount on the Samsung Galaxy Z Fold7 makes the most ambitious Android phone of 2025 significantly more accessible.

The Samsung Galaxy Z Fold7 is currently priced at $1,719.99 due to a limited-time offer, which provides a $400 discount from its original price of $2,119.99, and this deal is for the 512GB version that is worth waiting for. Foldable smartphones have significantly advanced in the past two generations, and the Z Fold7 strongly supports the case for the viability of this design.

A $400 discount on the Samsung Galaxy Z Fold7 makes the most ambitious Android phone of 2025 significantly more accessible.

The Samsung Galaxy Z Fold7 is currently priced at $1,719.99 due to a limited-time offer, which provides a $400 discount from its original price of $2,119.99, and this deal is for the 512GB version that is worth waiting for. Foldable smartphones have significantly advanced in the past two generations, and the Z Fold7 strongly supports the case for the viability of this design.

Ericsson falls just short of Q1 profit expectations due to a decline in North America.

In Q1 2026, Ericsson's adjusted EBITA declined by 20% to SEK 5.6 billion due to a downturn in North America and increased semiconductor expenses. CEO Ekholm points to the demand for AI impacting chip availability.

Ericsson falls just short of Q1 profit expectations due to a decline in North America.

In Q1 2026, Ericsson's adjusted EBITA declined by 20% to SEK 5.6 billion due to a downturn in North America and increased semiconductor expenses. CEO Ekholm points to the demand for AI impacting chip availability.

A $400 discount on the Samsung Galaxy Z Fold7 makes the most daring Android phone of 2025 significantly more accessible.

The Samsung Galaxy Z Fold7 is now available for $1,719.99 in a limited-time offer, which is a $400 discount from its original price of $2,119.99. This is the 512GB variant that is definitely worth considering. Foldable phones have significantly developed over the past two generations, and the Z Fold7 strongly demonstrates that the form factor has […]

A $400 discount on the Samsung Galaxy Z Fold7 makes the most daring Android phone of 2025 significantly more accessible.

The Samsung Galaxy Z Fold7 is now available for $1,719.99 in a limited-time offer, which is a $400 discount from its original price of $2,119.99. This is the 512GB variant that is definitely worth considering. Foldable phones have significantly developed over the past two generations, and the Z Fold7 strongly demonstrates that the form factor has […]

DeepL introduces real-time voice-to-voice translation in more than 40 languages.

DeepL has introduced Voice-to-Voice, a real-time spoken translation suite designed for meetings, conversations, and enterprise API usage.