Anthropic secures its largest computing agreement to date with Google and Broadcom as its run rate reaches $30 billion | TNW

Anthropic has reached an agreement to secure around 3.5 gigawatts of next-generation Google TPU compute capacity through Broadcom starting in 2027, its largest infrastructure commitment to date. The announcement also revealed that Anthropic's revenue run rate has exceeded $30 billion, up from about $9 billion at the end of 2025.

The AI lab attributes the scale of the commitment to its commercial expansion, which now requires infrastructure that would have seemed unrealistic two years ago. The deal, revealed on April 6, 2026, builds on the 1 gigawatt already provisioned for 2026 and gives Anthropic additional capacity for training and inference workloads.

According to Krishna Rao, Anthropic's chief financial officer, this represents “our most significant compute commitment to date,” and reflects the company's methodical approach to scaling its infrastructure. Most of the new capacity will be based in the United States, complementing the $50 billion investment in American AI computing facilities that Anthropic announced in November 2025.

The announcement also highlights Broadcom's critical role in the partnership as the bridge between Google’s custom silicon and Anthropic’s computing needs. Alongside the Anthropic deal, Broadcom signed a long-term agreement with Google to design future custom TPU chips, as well as a supply assurance agreement for networking components through 2031. This makes Broadcom a vital player in AI infrastructure: rather than building AI models itself, it focuses on the silicon and interconnects those models depend on. Following the announcement, Broadcom’s shares rose about 3%, reflecting investor interest in companies at the foundational layer of the AI ecosystem. Mizuho analysts projected that Broadcom would earn $21 billion in AI revenue from Anthropic in 2026 alone, potentially rising to $42 billion in 2027.

Broadcom had already signaled the importance of its relationship with Anthropic in September 2025, when a $10 billion order for custom TPU racks was confirmed to have come from Anthropic, followed by an additional $11 billion order. The April 2026 announcement extends that partnership into a multi-gigawatt infrastructure commitment.

The compute deal tracks Anthropic's rapid revenue growth: the company reports that its revenue run rate now exceeds $30 billion, up from approximately $9 billion at the end of 2025. The increase follows a Series G funding round in February 2026 that raised $30 billion at a $380 billion valuation, led by GIC and Coatue alongside other co-investors. Since that round, the number of businesses spending over $1 million annually with Anthropic has surpassed 1,000, doubling in less than two months and driving the need for more compute capacity.

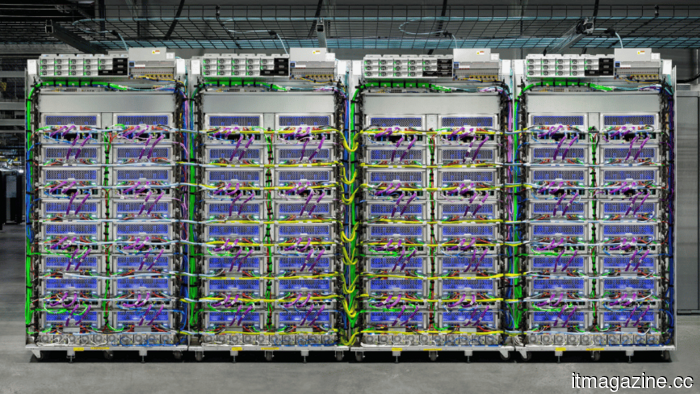

What sets Anthropic's infrastructure strategy apart from its competitors is its multi-vendor chip approach. Claude, Anthropic's AI model, operates across three hardware platforms: Amazon's Trainium, Google’s TPUs, and Nvidia GPUs. This strategy provides Anthropic with both resilience and bargaining power, allowing for workload shifts if any platform experiences capacity issues or supply disruptions. Microsoft’s own AI models show a similar strategy of diversifying dependencies.

Anthropic’s relationship with AWS remains crucial: Anthropic has named Amazon its primary cloud and training partner, and Amazon has invested over $8 billion in the company. A significant project, Project Rainier, is underway to build a supercomputer cluster from a large number of Amazon Trainium 2 chips, and it is expected to scale further through 2025. The new Broadcom deal adds to this relationship rather than replacing it.

The April announcement explicitly extends Anthropic’s previous $50 billion commitment to U.S. AI infrastructure, originally developed with Fluidstack in Texas and New York. Broadcom’s new capacity, predominantly based in the U.S., will continue to expand capability into 2027 and beyond, aligning with the U.S. government’s strategic focus on bolstering domestic AI compute capacity under the AI Action Plan.

The agreement reinforces a trend of the past 18 months: AI labs urgently need compute on a scale that far exceeds what their revenue can finance, a dynamic also seen in SoftBank's investment in OpenAI and Meta's infrastructure deal. Rising compute demand is also prompting AI companies to manage their relationships with services built on their models more carefully, as seen in Anthropic's recent limits on access to Claude through third-party frameworks.

For Broadcom, the deal marks a notable evolution: in a relatively short span, the company has become a key player in AI infrastructure. Its role as a custom silicon provider for major AI models such as Google’s Gemini and Anthropic’s Claude underscores a pivotal shift in the semiconductor industry this decade, even as Nvidia remains a dominant force in AI accelerators.

